What Generative AI Outputs Can Teach Us About Anatomy Misconceptions

My social media feed has always been a little unhinged. Between biology research and anatomy teaching, my algorithm skews more technical and medical than most. Recently, after reading up on senior dog care, it has also picked up a bunch of dog mobility ads. So, when I saw an ad for a dog joint supplement featuring a leg with two symmetrical fibulas, I thought, Oh, that’s funny! Clearly a marketing person used an AI-generated image without checking the accuracy. I saved the image for a rainy day and scrolled on.

But then, I started to notice more.

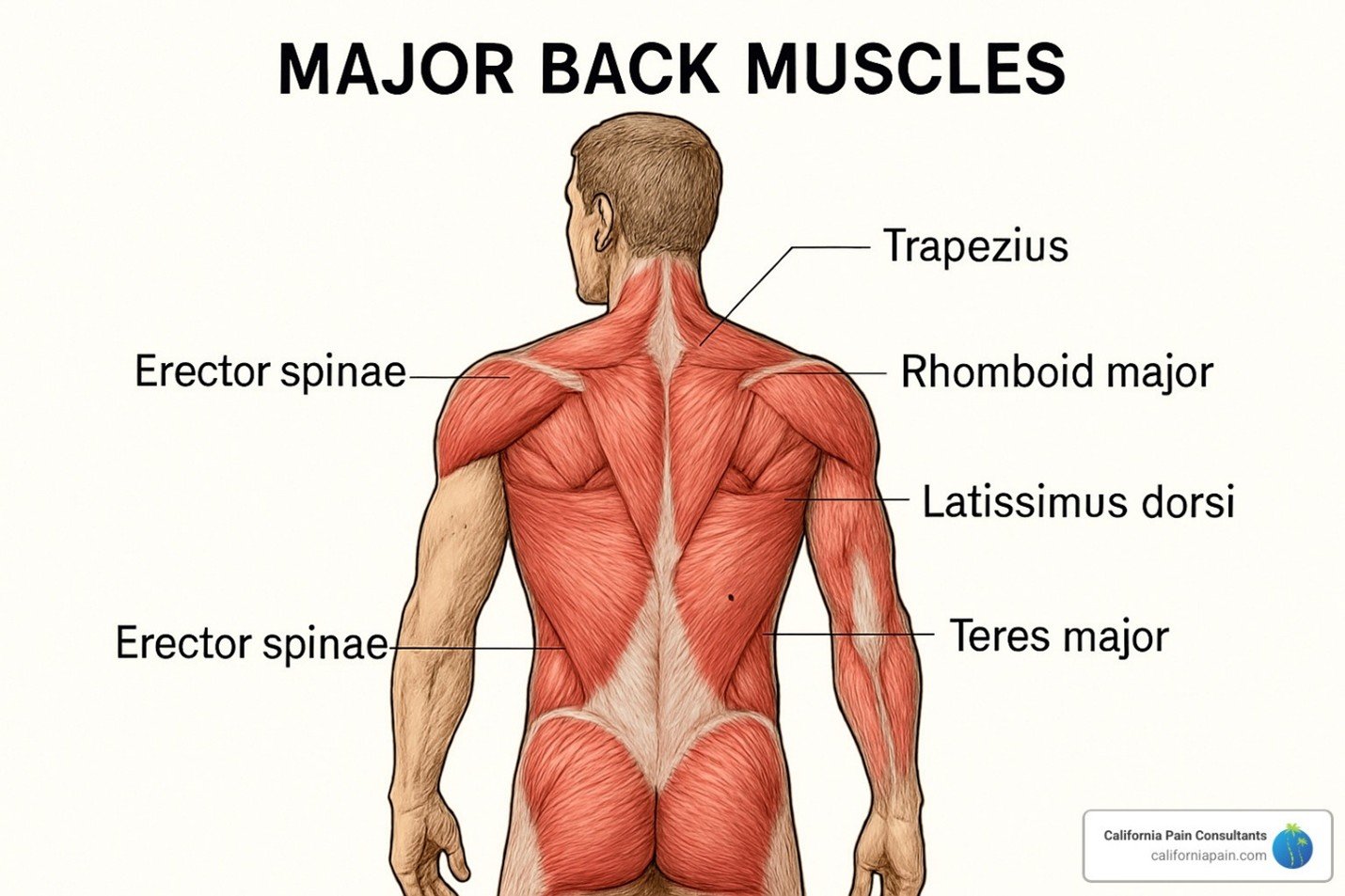

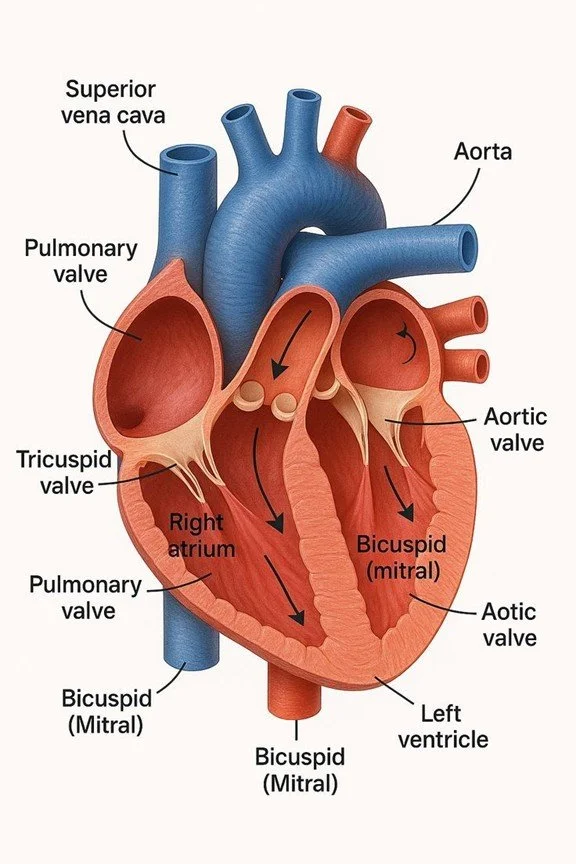

When I search for quick reference images for students, the first page of results (often drawn from healthcare practices and education websites) includes AI-generated images that look correct at thumbnail size. At full size, however, the issues are obvious: incorrect directionality, distorted edges, duplicated labels, and misplaced structures.

The duplicated labels felt especially familiar. They reminded me of grading anatomy lab practical exams, which made me wonder: what can generative AI errors teach us about how introductory students think about anatomy?

Why Generative AI Makes Anatomy Mistakes

Outside of anatomical illustration, we have already seen how generative AI tools struggle with human anatomy (e.g., widely shared AI-generated images of hands with the wrong number of fingers or misshapen ones). Publicly available text-to-image tools (e.g., Midjourney or DALL·E) are not specialized to “learn” anatomy. They generate images by statistically blending patterns from large datasets, creating composites of surface-level visual features. As a result, they cannot assign meaning to anatomical boundaries or edges, or maintain internal consistency across a structure. While AI models are improving, research has documented that AI-generated images in anatomy education contexts are riddled with errors and fabrications, especially in detailed boundaries like muscle attachments.

In short, generative AI tools reproduce surface-level visual averages of what anatomy looks like, not what it is.

Where This Mirrors Student Thinking

While AI learning is not the same thing as human learning, I find it interesting that students often make the same kinds of errors. When students rely on surface-level recognition, they may:

Recall that a term belongs to a region, but misidentify the structure

Struggle to distinguish boundaries between adjacent structures

Misinterpret directionality or spatial relationships

These errors are easier to make when learning anatomy is treated as a memorization task rather than a meaning-making one. To learn anatomy, students need to integrate language, location and orientation, and structure-function relationships. When those elements are not fully integrated, students can make the same errors as those in the AI outputs: associated terms, decoupled from meaning.

Implications for Teaching Practice: Edges, Not Just Locations

Just as the misconceptions mirror each other, part of the solution in both cases lies in how AI tools or students are trained to think about anatomy. Spatially conditioned AI tools that specialize in 3D reasoning are built to interpret layered images and, applied to anatomy, would likely be better suited to connecting attachment points, relative size and location, and directionality. In human development, we know that spatial ability develops hierarchically: students move from basic perception (size, distance, orientation), to mental mapping (linking language to 3D space), to simulation (predicting movement, function, or interaction). In anatomy education, the challenge is building scaffolds between these levels of understanding, helping students move from recognizing a term or structure to reasoning about what it does and, further, what happens when it is disrupted.

Try In Your Classroom

In my own teaching practice, I now try to make reasoning about boundaries and relationships more explicit for anatomy students, to help reinforce meaning-making. To that end, one exercise that may push students to think more deeply about boundaries is having them evaluate AI-generated images.

Ask students:

What is incorrect in this image?

Where are the boundaries between structures unclear or wrong?

What structure-function relationships are misrepresented?

Which labels are misapplied?

How would you correct this?

Continue the Conversation

If you try this activity or have your own examples of AI anatomy errors worth dissecting, I want to hear about them. And if you are looking for more strategies for teaching biology, anatomy, and physiology with depth, there is more where this came from.